Much has been written about the infamous #pizzagate conspiracy theory (“scandal” for those who believed in it) and, we are sure, much more will be written in the future. The fact that the outrageous and sick imagination of a few online trolls was able to persuade thousands of people that it was real, and to motivate one of them to walk into the Comet Pizza with a loaded gun, will be a matter of study for many psychologists, sociologists, and political scientists. Given that a workshop on Digital Misinformation will be held in Montreal in a few days, we thought this would be a good time to share some notes from a TwitterTrails story we did.

On December 2, 2016, we ran a TwitterTrails investigation that collected Twitter data containing the hashtag #pizzagate. We present here a few interesting observations about how it spread, who used it first, and what the shape of the community engaged in spreading it was up to that time. Below are some of our findings. You can always explore the TwitterTrails story on your own.

Who used the hashtag #pizzagate first?

According to our data, the first mention of #pizzagate was at 8:34 AM GMT on Nov. 6, 2016, two days before the US elections. It was sent while the vast majority of people in the US were sleeping, by a troll who has promoted tens of thousands of provocative lies to its 2,000 followers. Most of the followers are certainly bots designed to infiltrate online groups willing to believe them, in this case Trump supporters.

If you want to know more about how trolls and spammers are successful in promoting lies, take a look at The Real “Fake News” post by Prof. Eni Mustafaraj.

Who made #pizzagate widely known?

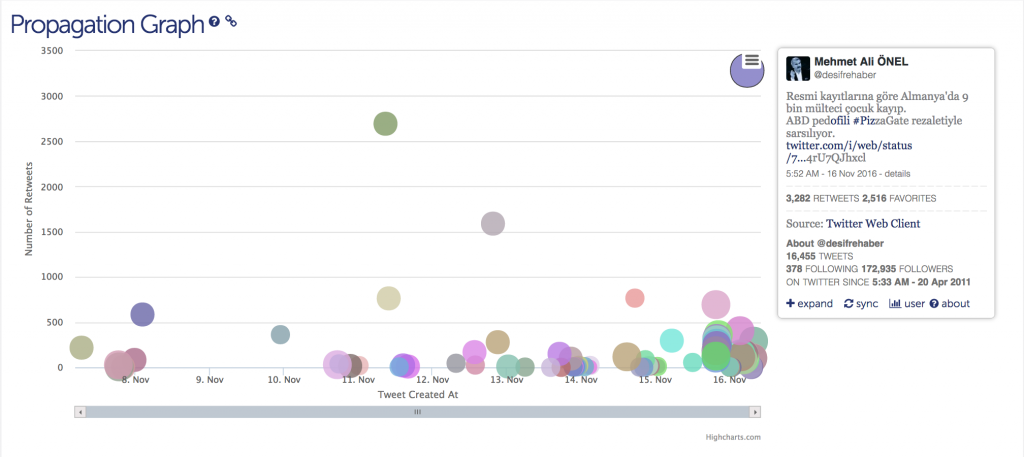

The propagation graph below shows who the main propagators of a rumor were when its activity showed its first “burst”.

Clicking on the (partially covered) purple data point in the upper right corner, we find that, surprisingly, the first tweet that had over 3000 retweets belongs to a pro-Erdogan Turkish journalist! According to The Daily Dot columnist Efe Sozeri, Turkey was at that time outraged by a child abuse scandal and by controversial pending legislation on child marriage, and governmental sources were trying to show that their scandal was minor compared to the US scandal.

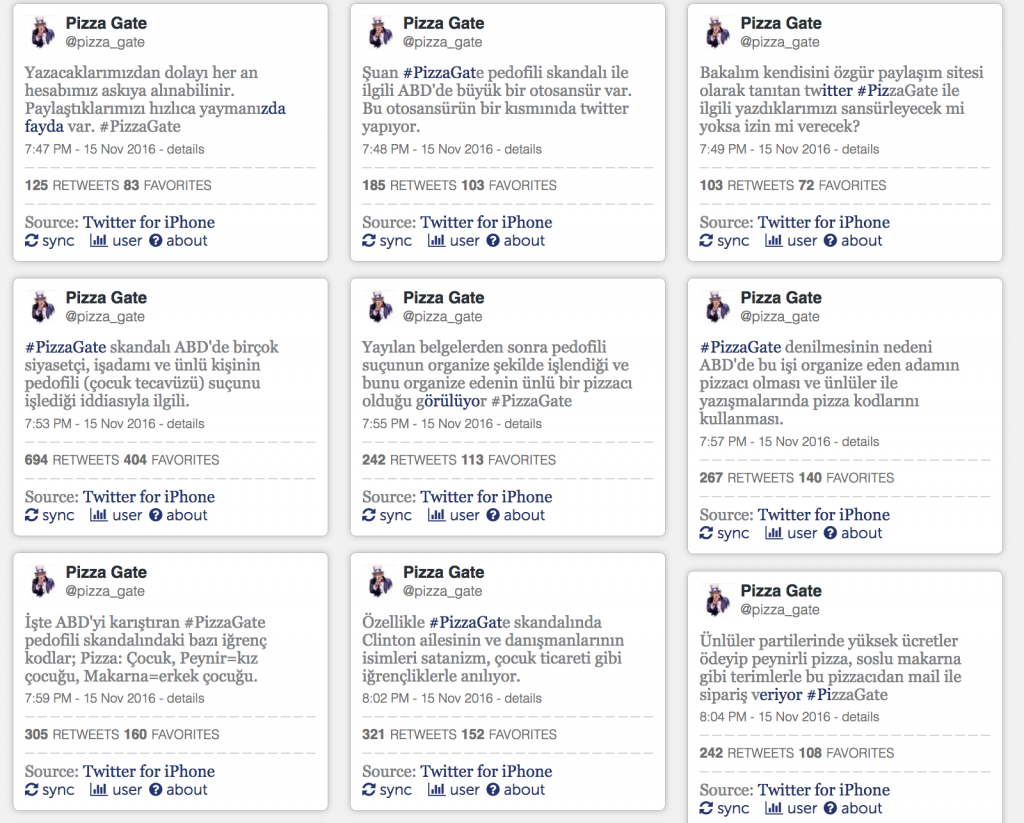

But who informed the Turkish journalist about pizzagate? The propagation graph offers some evidence that he was informed by a barrage of tweets that occurred a few hours before his posting. The colorful column of data points just before his tweet comes from a troll sending dozens of tweets in Turkish. Here are a few of them as recorded in TwitterTrails:

A deafening echo chamber

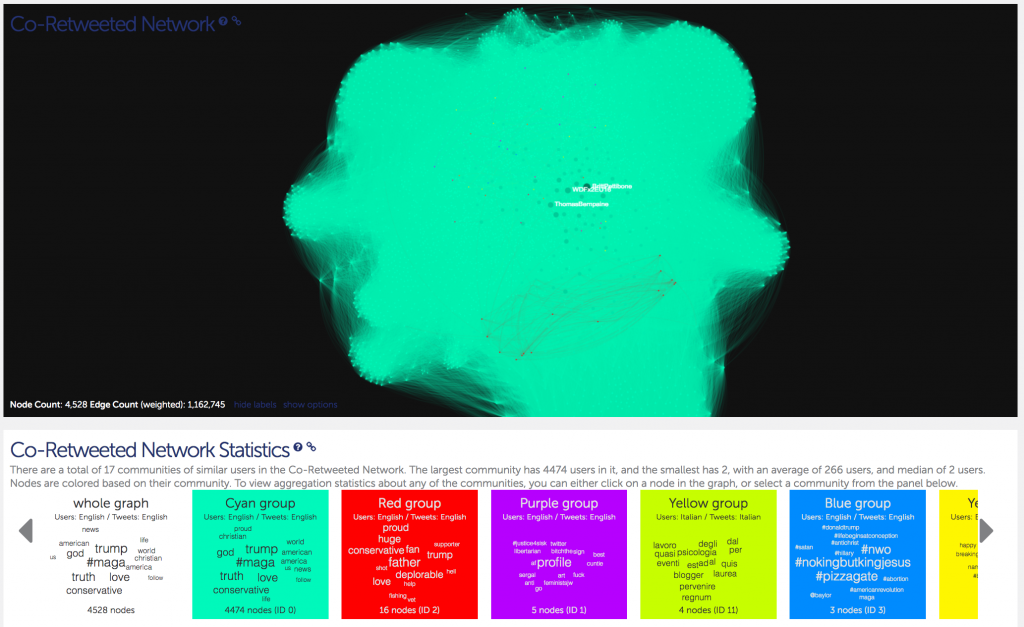

The co-retweeted graph of the Twitter exchange about pizzagate shows a dense echo chamber that merely confirms the validity of the conspiracy to its participants and allows no doubt to emerge:

This is the densest echo chamber we have observed on TwitterTrails. Among the 22,000 accounts posting about pizzagate, 4,528 have risen to prominence, being retweeted by at least two other accounts over a million times! Looking at the word cloud that characterizes the cyan group of 4,474 participants, we see that the most common words in their profiles are #maga, trump, truth [sic], love, god, conservative.

How different is this graph from other graphs of political discourse? For comparison, we show what a typical co-retweeted network looks like when discussing political issues. Below is the graph related to the 2016 vice-presidential debate:

In this graph, you can see the two communities, their polarization, and the partial overlap as people read both sides but prefer one of them.
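For readers unfamiliar with co-retweeted graphs, here is a minimal sketch of how such a network could be built from retweet data. It is not the TwitterTrails implementation: the function name `co_retweeted_edges`, the `(retweeter, original_author)` input format, and the `min_shared` threshold are our own assumptions for illustration. The idea is that two accounts are linked when the same users retweeted both of them, so an echo chamber shows up as one dense cluster and a polarized debate as two clusters with few links between them.

```python
from collections import defaultdict
from itertools import combinations


def co_retweeted_edges(retweets, min_shared=2):
    """Build weighted co-retweeted edges.

    retweets: iterable of (retweeter, original_author) pairs (assumed format).
    min_shared: hypothetical threshold; keep an edge only if at least this
    many distinct users retweeted both accounts.
    Returns a dict mapping (author_a, author_b) to the number of shared retweeters.
    """
    # Collect the set of authors each user has retweeted.
    retweeted_by_user = defaultdict(set)
    for retweeter, author in retweets:
        if retweeter != author:  # ignore self-retweets
            retweeted_by_user[retweeter].add(author)

    # Every pair of authors retweeted by the same user gains one shared retweeter.
    shared = defaultdict(set)
    for retweeter, authors in retweeted_by_user.items():
        for a, b in combinations(sorted(authors), 2):
            shared[(a, b)].add(retweeter)

    return {pair: len(users) for pair, users in shared.items()
            if len(users) >= min_shared}


# Toy data, made up for illustration only.
sample = [
    ("u1", "A"), ("u1", "B"),
    ("u2", "A"), ("u2", "B"),
    ("u3", "A"), ("u3", "C"),
]
edges = co_retweeted_edges(sample)
print(edges)
```

In this toy data, accounts A and B are linked because two users retweeted both, while A and C share only one retweeter and fall below the assumed threshold.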

What else can we find?

These are just some of the insights that TwitterTrails can offer to a journalist or anyone who might want to study the propagation of a rumor. If you want to study it further, use the TwitterTrails story of the hashtag #pizzagate and send us a comment!